Innovation Law and Regulation Series: Defending Data from Silicon Eyes

- Sofia Grossi

- Nov 5, 2023

Updated: Sep 15, 2024

Foreword

Artificial Intelligence systems are set to be the next revolution, forever changing humans’ lives. This new phenomenon and its many effects will cause great changes in our society, which is why regulation is the first step toward ethical development. In fact, unregulated use of these technologies could give rise to negative consequences such as discriminatory uses and disregard for privacy rights. The challenges brought by the use of Artificial Intelligence urge legislators and experts to protect citizens and consumers, as regulation becomes a priority if humans wish to protect themselves from unethical and abusive conduct. This series explores new technologies such as Artificial Intelligence systems and their possible regulation through legal tools. To do so, it starts with an explanation of the rise of new technologies and delves into the complicated question of whether machines can be considered intelligent. Subsequently, the interplay between Artificial Intelligence and different branches of law is analyzed. The first chapter explored the possibility of granting A.I. systems legal personality and the main legislative steps taken in the EU in that direction. Moving into the realm of civil law, the second chapter considered the current debate on the liability regime concerning the use and production of A.I. The third chapter discussed the influence of A.I. on contract law and the stipulation of smart contracts. The fourth chapter examined the use of A.I. in criminal law and the administration of justice, with a focus on both the positive and negative implications of its use. The fifth chapter was dedicated to the use of Artificial Intelligence by public sector bodies and how new technologies could improve the field of administrative law. Finally, the present chapter illustrates the complicated relationship between data protection, privacy, and A.I. in light of the EU General Data Protection Regulation.

The series is divided into six articles:

Innovation Law and Regulation Series: Recognizing Silicon Minds

Innovation Law and Regulation Series: AI on Trial, Blaming the Byte

Innovation Law and Regulation Series: Navigating Smart Contracts

Innovation Law and Regulation Series: Defending Data from Silicon Eyes

Innovation Law and Regulation Series: Defending Data from Silicon Eyes

Privacy is a concept that dates back to the philosophy of the ancient Greeks and could be described as a general interest in shielding one’s life from the public (DeCew, 2015). Nowadays, the concept of privacy has naturally adapted to the new contexts in which people live. As a result, privacy has also had to come to terms with new technologies that are becoming more relevant and intrusive. Artificial Intelligence, a prime example of those technologies, therefore has a complicated relationship with privacy, which scholars are scrutinizing as part of a new and fascinating debate. The purpose of this article is to shed light on that debate and to illustrate the most critical points of intersection between Artificial Intelligence and privacy. To do so, the first part of this article explains the concept of privacy and the legislative evolution of the right to privacy within the European legal framework. Subsequently, the focus shifts to the main areas of intersection between Artificial Intelligence and privacy concerns, namely the use of machine learning systems by public authorities and private actors in the urbanization of cities, in law enforcement, and in criminal investigations. Possible solutions are considered throughout as a potential way forward to improve the relationship between Artificial Intelligence and privacy rights. The last part offers some general remarks.

Privacy Over the Centuries

The Greek philosopher Aristotle made a distinction between public and private life, first drawing a significant line between what is public and what is not. Centuries later, the ancient Romans referred to the legal concept of jus respiciere to describe unwanted intrusions into their personal lives (DeCew, 2015). This shows how relevant the topic of privacy has been throughout history. During the Middle Ages, interest in privacy rights kept expanding, as made evident by the notable number of cases brought before judges against people eavesdropping or reading personal letters (Holvast, 2009). Although the law was still unclear on the matter, people kept showing a significant interest in how their private spheres of life could receive protection. However, real interest in and formal recognition of privacy only came about in the United States in the last years of the nineteenth century (Holvast, 2009). American lawyers Louis Brandeis and Samuel Warren published what is still considered the first publication advocating for the right to privacy (Glancy, 1979). The article, titled “The Right to Privacy”, was published in 1890 in the Harvard Law Review and articulated the concept of the right to be left alone (Warren & Brandeis, 1890). The two authors were both lawyers educated at Harvard Law School, and Brandeis went on to serve as an associate justice of the United States Supreme Court. Brandeis and Warren explained that existing laws were not enough to effectively protect the right to privacy and, therefore, the right to be protected against others’ invasions (Warren & Brandeis, 1890). In fact, the laws concerning slander and libel were regarded as insufficient given that they only dealt with cases in which one's reputation had suffered concrete damage (Warren & Brandeis, 1890).

However, starting from intellectual property law, which protects the content of writings and other means of artistic expression, the two authors come to the conclusion that there is a concept of privacy (Warren & Brandeis, 1890). From that reasoning, the article concludes that the right to privacy can be understood as the right to be left alone and, thus, to refuse interference by external agents (Warren & Brandeis, 1890). The publication of the article is referred to as a revolution that led the governmental and judicial institutions of the United States to rethink how the right to privacy was conceived and protected. Alan F. Westin, professor of public law at Columbia University, defined privacy as "the claim of individuals, groups, or institutions to determine for themselves when, how, and to what extent information about them is communicated to others" (Westin, 1967). The formal recognition of the right to privacy has become more and more relevant up to the present day, when the debate surrounding privacy has become central and of widespread interest, even without a precise definition of what privacy is. In fact, as Anne Branscomb, an American computer and communication lawyer, said, “The good news about privacy is that eighty-four percent of us are concerned about privacy. The bad news is that we do not know what we mean” (Branscomb, 1994).

The legal recognition of privacy came about in the 20th century, when multiple international conventions recognized human rights and, amongst them, the human right to privacy. The most evident examples are Article 12 of the Universal Declaration of Human Rights (United Nations, 1948), Article 17 of the International Covenant on Civil and Political Rights (United Nations, 1966), Article 8 of the European Convention on Human Rights (Council of Europe, 1950) and Article 7 of the Charter of Fundamental Rights of the European Union (EU Charter, 2000). A relevant element that these provisions have in common is that privacy is not only recognized as a right to which all humans are entitled, but also as a right protected against interference by both private and public parties. However, these provisions fail to set out a clear definition of privacy and are worded as principles rather than as clear rules on how privacy can and cannot be interfered with. As the borders of the right to privacy were still blurry, many cases were brought before international courts because ordinary judges lacked a clear understanding of how to apply those provisions (Frantziou, 2014). A prime example and leading case is Google Spain.

In the Google Spain case before the Court of Justice of the European Union, Mr. Mario Costeja González complained that a Google search of his name still displayed two property auction notices for the recovery of social security debts that he had owed 16 years earlier (Frantziou, 2014). The applicant sought an order that Google either remove or hide the links to those pages. The Spanish High Court referred the matter to the Court of Justice for a preliminary ruling on the interpretation of Articles 7 and 8 of the EU Charter of Fundamental Rights (Frantziou, 2014). In light of those two provisions, the Court ruled that Google acted as a controller of the processing of personal data as provided by Article 8 of the EU Charter of Fundamental Rights. Furthermore, the Court stated that the data subject may request, in the light of his fundamental rights under Articles 7 and 8 of the EU Charter, that the information in question no longer be made available to the general public by its inclusion in such a list of results (Frantziou, 2014). Consequently, the right to privacy was once again affirmed as a general fundamental right capable of limiting the actions of others which might negatively affect one’s private sphere of life. Even more extraordinary was the fact that the judges recognized the damaging effect that technology might have on one’s privacy.

Privacy concerns and rights received a great deal of attention in 2016, when European Union institutions made a pivotal change of direction following the judgment of the Court of Justice. The crafting of a detailed regulation centered on the protection of people’s right to privacy made it clear that it is unthinkable to live in a world where one is not entitled to full recognition of that right. Because it takes the form of a regulation, the General Data Protection Regulation applies in its entirety in all Member States of the European Union without the need to transpose its provisions into national law (Voigt & von dem Bussche, 2017). This ensures effective application and legal certainty across the Member States, with extraordinary consequences for individuals, who can now expect a high degree of protection of their privacy. The main objective of the General Data Protection Regulation is to protect the personal data of all people, and it defines personal data as any information relating to a person who can be identified either directly or indirectly (Voigt & von dem Bussche, 2017). This broad definition further strengthens the effectiveness and scope of application of the General Data Protection Regulation, making it possible to classify a vast range of activities as processing of personal data. The General Data Protection Regulation lists the main duties of the controller and the processor and ensures that those duties reflect the main rights to which the data subject, that is to say the individual, is entitled. Amongst the rights of the data subject, the General Data Protection Regulation includes the right of access, the right to rectification, the right to erasure, the right to restrict processing, the right to data portability, the right to object and the right not to be subject to a decision based solely on automated processing (Voigt & von dem Bussche, 2017).

The Interplay between Privacy and Artificial Intelligence

As mentioned above, Artificial Intelligence technology is on its way to becoming more and more relevant, with extraordinary consequences expected in every aspect of people's lives. Society is already witnessing revolutionary changes such as self-driving cars or tools able to suggest what music to play based on people’s tastes and needs (Greenblatt, 2015). It is likely that developments of this sort will increase exponentially over the span of just a few years and change how mundane tasks are carried out. However, beyond such ordinary tasks, new machines have also made their way into the most significant aspects of our lives, such as law, healthcare, and economic activities (Greenblatt, 2015). It is now time to reflect on concrete examples of how Artificial Intelligence and privacy come together and how the protection of privacy may be undermined. The next paragraphs illustrate three areas in which privacy rights might be sacrificed by the use of technology.

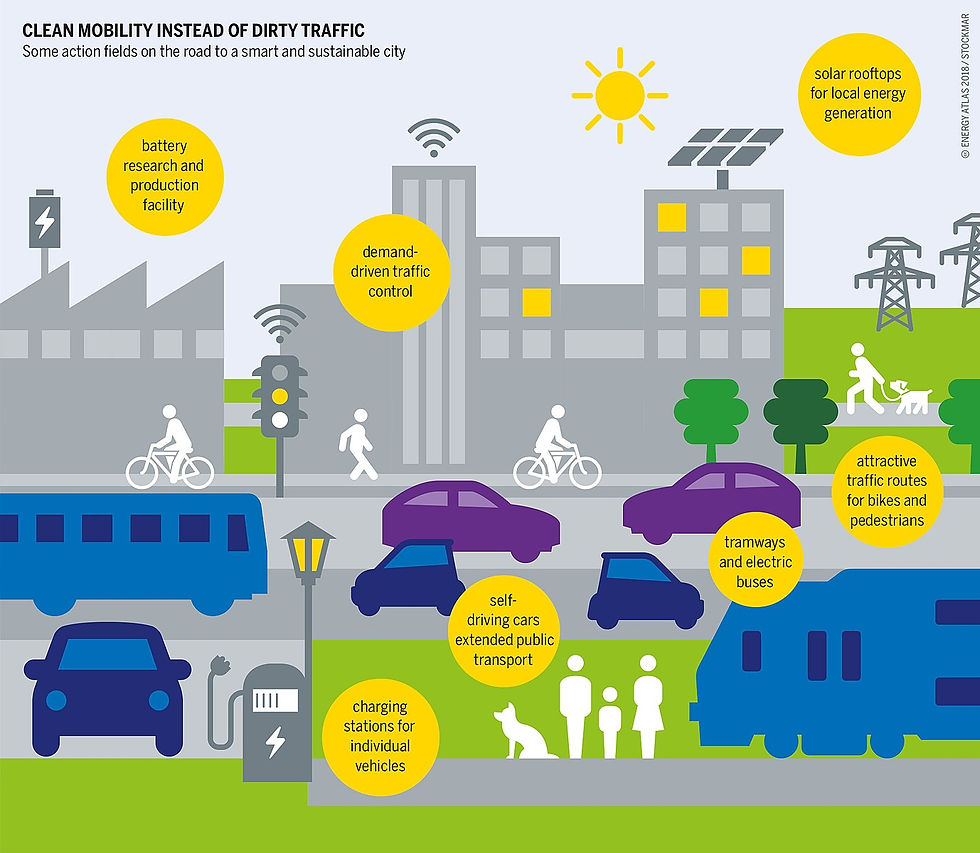

Smart Cities: From Urban Efficiency to Mass Surveillance

Efficiency is inevitably amongst the primary objectives of good territorial administration. That is why smart cities are becoming a trend in many countries, with the objective of making citizens' lives easier and services more accessible. Smart cities, often hailed as the future of urban development, are built on an intricate web of interconnected technologies and data-driven solutions (Batty et al., 2012). Thus, smart cities are a prime example of how innovation can change the very essence of human lives. These cities rely on a constant flow of data to optimize services like traffic management, waste collection, and energy consumption (Batty et al., 2012). However, the collection of data, especially personal information, can infringe upon individual privacy and raise concerns about whether the activities carried out by public authorities and private developers comply with privacy rights and regulations. Location data, for instance, can be used to track an individual's movements, raising doubts about the legitimacy of what might become constant surveillance of individuals in violation of the right to freedom of movement (Article 12, International Covenant on Civil and Political Rights). Furthermore, the constant use of technological surveillance tools may lead to other human rights violations, such as interference with the right to private life (Article 8, European Convention on Human Rights). On another note, the collection of big data without appropriate safety measures could expose personal data to hackers, with the risk of information being shared without consent and outside any legitimate rules (Zetter, 2016). In particular, Articles 13 and 14 of the General Data Protection Regulation require controllers to ensure that third parties do not receive personal data unless they comply with all provisions contained in the Regulation. Furthermore, in democratic societies, interference by public authorities in private spheres of life should be kept to a minimum in order to comply with the multiple provisions of international law protecting human rights as inviolable. For this reason, it seems unlikely that the implementation of Artificial Intelligence systems all over cities would not give rise to a violation of the fundamental right to privacy (Wernick, Banzuzi & Mörelius-Wulff, 2023).

The only way forward would be the true and concrete application of Article 25 of the General Data Protection Regulation, which establishes that the controller, meaning the party determining the purposes and means of the processing of personal data (in this case the public authority and the private developers of the technologies), shall implement technologies that comply with human rights and privacy rights (Wernick, Banzuzi & Mörelius-Wulff, 2023). However, this does not solve the lack of regulation on the creation of smart cities and might remain a vague command with no practical application. China is the country in which the above-mentioned scenarios have unfolded in practice. The beginning of China's smart city project can be traced back to the mid-1990s, with the aim of developing informational infrastructure all over the country, marking the beginning of an urban renewal with the help of new technologies (Jang & Xu, 2018). China underwent great changes because of the use of these new technologies and changed how cities were conceived. In 2011, the Chinese Government released its 12th Five-Year Plan, outlining the projected future of China and the expected course of action for its economic development (Jang & Xu, 2018). The 12th point of this plan explicitly mentions and provides for the creation and implementation of smart city policies (Jang & Xu, 2018). From that moment on, technological applications in smart cities have expanded remarkably, achieving astonishing results in terms of efficiency. On the one hand, the smart city project in China contributes to solving contemporary urban issues, such as rationing the use of energy and natural resources and providing adequate and up-to-date infrastructure. As a result, this approach has significant potential to improve the quality of life for citizens. On the other hand, the technical requirements for the operation of smart cities, which rely on indiscriminate big data collection and analysis, come at the expense of citizens' control over their personal data.

In May 2019, security researcher John Wethington found a Chinese smart city database accidentally accessible from a web browser and downloaded parts of it (Whittaker, 2019). This gave an insight into how smart cities can serve as a justification for processing immense amounts of data and monitoring citizens. The database contained pictures of citizens recorded by facial recognition systems, classified by ethnic background, gender, age, and criminal record (Whittaker, 2019). Measured against the General Data Protection Regulation, such processing would clearly conflict with the restrictions it places on profiling (Article 22, General Data Protection Regulation). Profiling is defined as “any form of automated processing of personal data consisting of the use of personal data to evaluate certain personal aspects relating to a natural person, in particular to analyse or predict aspects concerning that natural person’s performance at work, economic situation, health, personal preferences, interests, reliability, behaviour, location or movements” (Article 4, General Data Protection Regulation). Furthermore, the General Data Protection Regulation provides that personal data shall not be stored for longer than is necessary for the purposes of the processing (Article 5, General Data Protection Regulation).

Predictive Crime Systems: From Safety to Illegitimate Data Processing

In recent years, public authorities have also started relying on new technologies to better carry out their tasks. Police forces are no different and, in their role of maintaining security and protecting public order against the commission of crimes, they have made use of Artificial Intelligence tools (Sierra-Arévalo, 2019). Activists, scholars, legal professionals, and journalists see promise in this renewed scenario, in which increased visibility is expected to contribute to enhanced accountability and a decrease in the illegitimate and disproportionate use of force by police officers (Brucato, 2015). This is a prime example of how technology can positively affect how the law and its agents operate. Recently, the Arizona-based company Taser upgraded its first generation of body cameras, which can now upload recorded videos directly to cloud storage (Brucato, 2015). Despite the praise for the expected increase in transparency and efficiency, criticism has arisen regarding the future implications of police cameras for individuals. Critics such as Jay Stanley, an American senior policy analyst, are concerned about the privacy of the people recorded by those systems. Given that police officers often enter homes and other places where people have a claim and a right to privacy, body cameras should not be used without the explicit and informed consent of the individuals concerned. However, it is not always possible to request consent, for instance in situations of urgency where immediate action is required. The result is that private information and personal data are not only recorded but also stored in cloud systems with no clear indication of how they could be used.

Another concern is that those body cameras will soon be upgraded with more sophisticated Artificial Intelligence systems. This could lead to cameras being able to profile the faces of the people police officers come in contact with, contrary to the above-mentioned rules on profiling in the General Data Protection Regulation. It could also lead to discriminatory uses, since Artificial Intelligence systems are trained on datasets which could be tampered with or affected by biased data and information. Furthermore, it could lead to scenarios in which police officers merely execute decisions taken by machines, which amounts to what is known as automated decision-making. An example would be the use of systems like HART (Harm Assessment Risk Tool). The system has been employed by the UK police to assess the risk of future offending and to provide those people classified as "moderate-risk" with the opportunity to participate in a rehabilitation program (Oswald, Grace, Urwin & Barnes, 2018). The system, explicitly designed for custody officers, could amount to automated decision-making given that there is no certainty that a human will be kept in the loop of the decision process. However, the General Data Protection Regulation provides that automated decisions can be made only in limited circumstances, notably where the decision is based on the explicit consent of the individual concerned (Article 22, General Data Protection Regulation). Where that is the case, the controller shall provide the individual with sufficient and precise information about the processing, simple ways to request human intervention, and methods to challenge a decision. Furthermore, the controller shall carry out regular checks to make sure that its systems are working as intended (Article 22, General Data Protection Regulation). According to the Law Enforcement Directive of 2016, States can limit the scope of application of the provisions of the General Data Protection Regulation when automated decisions are carried out for the purposes of the prevention, investigation, detection, or prosecution of criminal offenses or the execution of criminal penalties. In those cases, automated decisions can be taken without explicit individual consent, but only with authorization by national law and effective safeguards for the rights and freedoms of the data subject (Directive (EU) 2016/680). It is evident that European Union legislators chose to balance the different interests at stake: repression of crimes, on the one hand, and privacy rights, on the other. However, the result is still a great sacrifice for privacy. The fear is that, as declared by the European Court of Human Rights, in time people will "undermine or even destroy democracy under the cloak of defending it" (European Court of Human Rights, decision n. 308/2018).

Criminal Investigations: From Efficiency to Storage of Personal Data

In the realm of the administration of justice, public authorities are invested with the power to carry out investigations and, thus, to exercise criminal action. It is important to note that they must do so with the objective of gathering evidence effectively and quickly. In fact, it is a principle shared by most legal frameworks around the world that the efficiency of criminal investigations is a pillar of the good administration of justice (Deslauriers-Varin & Fortin, 2021). This entails that prosecutors are expected to conduct criminal investigations efficiently. One way to ensure better and more efficient investigations is to consider the use of technology (Costantini, De Gasperis & Olivieri, 2019). However, many problems may arise from that. Some of these have been considered in previous articles of this series, such as potential violations of human rights in terms of discrimination and biased decisions. On this occasion, it is fundamental to highlight potential concerns for privacy rights (Owsley, 2015). In fact, the use of Artificial Intelligence during criminal investigations may lead to a general and massive storage of information which might be irrelevant to the main objective of the investigations. In other words, information might be stored even though it is of no use in assessing the culpability of the suspect. This might give rise to a violation of the provisions of the General Data Protection Regulation requiring processing to be consistent with the purpose for which personal data were collected. Furthermore, Directive (EU) 2016/680 requires that, when processing is carried out for the purposes of the prevention, investigation, detection or prosecution of criminal offenses or the execution of criminal penalties, the personal data shall be relevant and not excessive at all times (Article 4, Directive (EU) 2016/680).

Another problematic aspect is the employment of the so-called Trojan horse technique, which consists in placing smart tools inside the suspect’s computers and electronic devices (Owsley, 2015). The method takes its name from one of the best-known tricks in history: the Trojan horse used by Greek soldiers to enter the city of Troy. This intrusion into the private life of a person is not subject to specific regulation in most democratic legal frameworks, despite representing a significant limitation of the right to privacy (Owsley, 2015). Moreover, Artificial Intelligence cameras and trackers have been employed not only by police officers to prevent crimes, as mentioned in the previous paragraphs, but also by public authorities during investigations to trace the movements of a suspect in a given period (Cupa, 2013). In Italy, for instance, the Artificial Intelligence system known as SARI Real Time has been at the center of a legislative and public debate after it was disclosed that the recorded videos had been used by public authorities when conducting criminal investigations. In particular, the videos proved useful in keeping track of the movements of suspects, given that the system was able to record a person's biometric information and match his or her face to other recordings kept in databases (Christakis & Lodie, 2022). However, the General Data Protection Regulation and Directive (EU) 2016/680 state that biometric data belong to the special categories of personal data and, for that reason, must not be processed unless in accordance with the rights and freedoms of the individual (Article 10, Directive (EU) 2016/680 and Article 9, General Data Protection Regulation). Furthermore, the European Court of Human Rights has held that the use of Artificial Intelligence systems must be subject to precise regulation and procedures provided by law, as they represent significant interferences with the right to privacy (ECHR, decision 8/2018).

As a result, it seems that the only way forward is a legislative reform that could finally achieve what the European Court of Human Rights has demanded over the years: a precise regulation of the use of Artificial Intelligence systems (Haber, 2023). Effective policymaking is, in fact, crucial in setting boundaries for how technology can be used, especially when unregulated use might lead to the sacrifice of the right to privacy. The need for a private sphere of life and the expectation of a certain degree of privacy cannot go unheard or be left to the discretion of police officers and public authorities, as this would amount to a clear violation of legislation on privacy rights (Haber, 2023). The steps taken by the legislator are still vague and scattered, leaving judges with the complicated task of creating case-by-case regulation, which is in evident contrast with the theory of the separation of powers classically articulated by Montesquieu and now shared among civil law systems (Waldron, 2013). Effective legislation on the uses of Artificial Intelligence by public and private agents would also ensure the correct and full application of the General Data Protection Regulation, which otherwise risks being a muffled warning with no consequences.

Conclusions

In conclusion, the use of Artificial Intelligence has led to unexpected consequences. On the one hand, this series has illustrated the extraordinary results of these new technologies in terms of efficiency. On the other hand, this article has illustrated the downside of Artificial Intelligence systems and the risks that their use might pose to the protection of privacy. Despite not being a clear and straightforward concept, privacy has been recognized as a fundamental human right after being included in multiple international and European conventions on human rights and freedoms. Subsequently, the intervention of legislators has made it even clearer how important it is that every individual can protect his or her information. However, this analysis has shown that the uses of Artificial Intelligence technologies in various fields, ranging from urbanization to law enforcement and criminal proceedings, seriously endanger the privacy of the people involved. It is, therefore, essential that competent bodies keep producing effective legislation to ensure that the right to privacy does not become a vague memory of the times when technology was not a constant and inevitable part of life.

Bibliographical References

Batty, M., Axhausen, K. W., Giannotti, F. et al. (2012). Smart cities of the future. Eur. Phys. J. Spec. Top, 214, 481–518. https://doi.org/10.1140/epjst/e2012-01703-3

European Court of Human Rights. Ben Faiza v. France, n. 8/2018.

European Court of Human Rights. Big Brother Watch v. United Kingdom, n. 308/2018.

Branscomb, A. W. (1994) Who Owns Information? From Privacy to Public Access. Basic Books, New York.

Brucato, B. (2015). Policing Made Visible: Mobile Technologies and the Importance of Point of View. Surveillance & Society, 13(3/4), 455-473. http://library.queensu.ca/ojs/index.php/surveillance-and-society/index. ISSN: 1477-7487

Christakis, T. and Lodie, A. (2022). The Conseil d’Etat Finds the Use of Facial Recognition by Law Enforcement Agencies to Support Criminal Investigations “Strictly Necessary” and Proportional. European Review of Digital Administration & Law 3(1), 159-165. ISSN 2724-5969 - ISBN 979-12-2180-078-4 - DOI 10.53136/9791

Cook, K. L. (1977). Electronic tracking devices and privacy: see no evil, hear no evil, but beware of trojan horses. Loyola University of Chicago Law Journal, 9(1), 227-247.

Costantini, S., De Gasperis, G. & Olivieri, R. (2019). Digital forensics and investigations meet artificial intelligence. Ann Math Artif Intell 86, 193–229. https://doi.org/10.1007/s10472-019-09632-y

Cupa, B. (2013). Trojan horse resurrected - On the legality of the use of government spyware. In Webster, C., William R. (eds.), Living in surveillance societies: The state of surveillance: Proceedings of LiSS conference. CreateSpace Independent Publishing Platform.

Deslauriers-Varin, N., Fortin, F. (2021). Improving Efficiency and Understanding of Criminal Investigations: Toward an Evidence-Based Approach. J Police Crim Psych 36, 635–638. https://doi.org/10.1007/s11896-021-09491-6

Frantziou, E. (2014). Further Developments in the Right to be Forgotten: The European Court of Justice's Judgment in Case C-131/12, Google Spain, SL, Google Inc v Agencia Espanola de Proteccion de Datos. Human Rights Law Review, 14(4), 761–777. https://doi.org/10.1093/hrlr/ngu033

Glancy, J. D. (1979). The Invention of the Right to Privacy. Arizona Law Review, 21, 1–39.

Greenblatt, J. B., Shaheen, S. (2015). Automated Vehicles, On-Demand Mobility, and Environmental Impacts. Curr Sustainable Renewable Energy Rep, 2, 74–81. https://link.springer.com/article/10.1007/s40518-015-0038-5

Haber, E. (2023). The Law of the Trojan Horse. UC Davis Law Review. https://ssrn.com/abstract=4400283

Oswald, M., Grace, J., Urwin, S. & Barnes, C. G. (2018). Algorithmic risk assessment policing models: lessons from the Durham HART model and ‘Experimental’ proportionality. Information & Communications Technology Law, 27(2), 223-250. https://doi.org/10.1080/13600834.2018.1458455

Owsley, B. L. (2015). Beware of government agents bearing trojan horses. Akron Law Review, 48(2), 315-348.

Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation) [2016] OJ L 119/1

Sierra-Arévalo, M. (2019). Technological Innovation and Police Officers' Understanding and Use of Force. Law & Society Rev, 53, 420-451. https://doi.org/10.1111/lasr.12383

Voigt, P. & von dem Bussche, A. (2017). The EU General Data Protection Regulation (GDPR). Springer.

Wagner DeCew, J. (1997). In pursuit of privacy: Law, ethics, and the rise of technology. Cornell University Press.

Waldron, J. (2013). Separation of powers in thought and practice. Boston College Law Review, 54(2), 433-468.

Wernick, A., Banzuzi, E., Alexander, M. (2023). Do European smart city developers dream of GDPR-free countries? The pull of global megaprojects in the face of EU smart city compliance and localisation costs. Internet Policy Review, 12(1), 1-45. https://doi.org/10.14763/2023.1.1698

Westin, A. (1967). Privacy and Freedom. Atheneum.

Yang, F., Xu, J. (2018). Privacy concerns in China's smart city campaign: The deficit of China's Cybersecurity Law. Asia Pac Policy Stud, 5, 533–543. https://doi.org/10.1002/app5.246

Zetter, K. (2016). Inside the cunning, unprecedented hack of Ukraine’s power grid. Wired. http://www.wired.co

Visual Sources

Figure 1: Lysippos. (330 BC). Bust of Aristotle. [Photograph]. Retrieved from https://commons.wikimedia.org/wiki/File:Aristotle_Altemps_Inv8575.jpg

Figure 2: West, V. (2014). The Man who sued Google in the Google Spain Case. [Photograph]. Retrieved from https://www.nytimes.com/2014/05/30/business/international/on-the-internet-the-right-to-forget-vs-the-right-to-know.html

Figure 3: Notman, W. (1875). American lawyer Samuel Warren. [Photograph]. Retrieved from https://commons.wikimedia.org/wiki/File:Samuel_Dennis_Warren_by_William_Notman,_c1875.jpg

Figure 4: Harris & Ewing. (1916). American lawyer Louis Brandeis. [Photograph]. Retrieved from https://en.wikipedia.org/wiki/File:Brandeisl.jpg

Figure 5: Energy Atlas. (2018). Smart cities of the future. [Illustration]. Retrieved from https://energytransition.org/2018/04/europe-must-choose-a-green-future/

Figure 6: Facial recognition system. (2005). [Photograph]. Retrieved from https://commons.wikimedia.org/wiki/File:Eigenfaces.png
